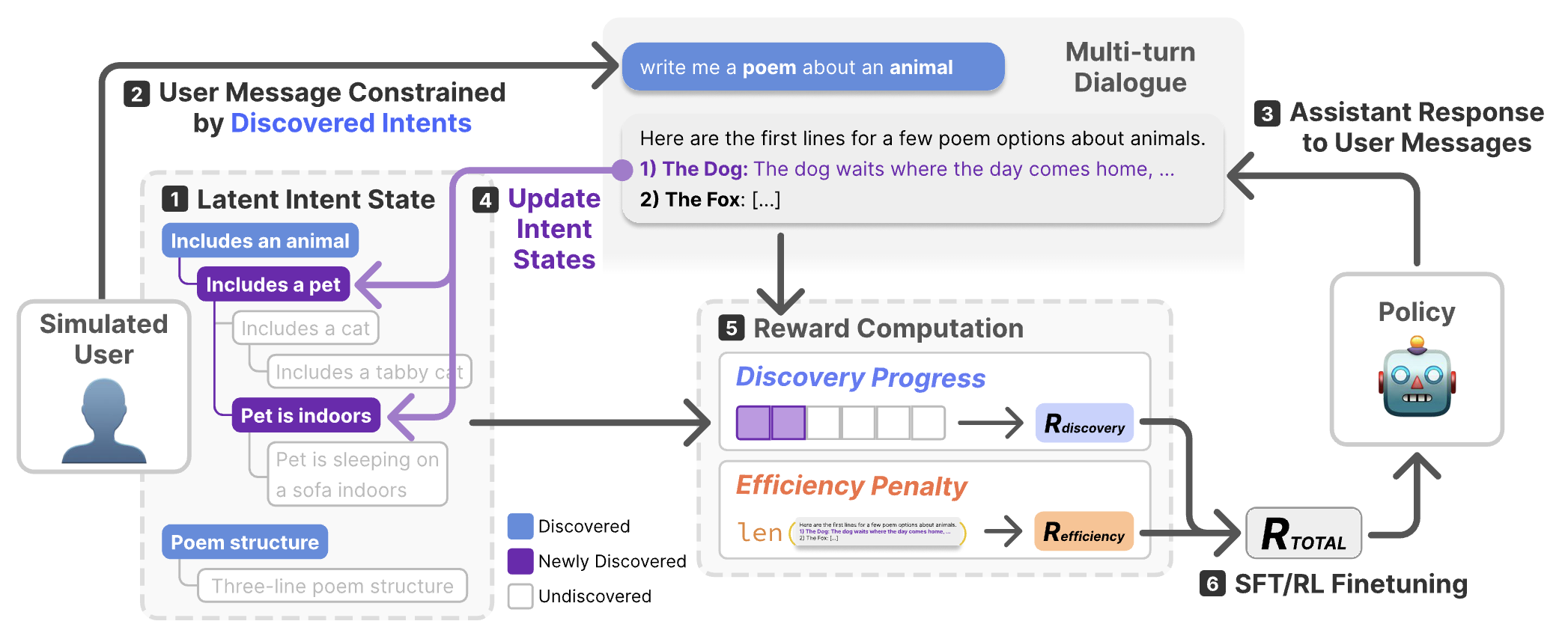

DiscoverLLM: From Executing Intents to Discovering Them

DiscoverLLM introduces a framework that trains LLMs to help users form and discover their intents through interaction. By using a novel user simulator that models cognitive states with a progressive hierarchy of intents, the framework trains models to handle ambiguous requests by surfacing relevant options that guide users toward concretizing what they want.

Evalet: Evaluating Large Language Models by Fragmenting Outputs into Functions

Evalet addresses the opacity of LLM-based evaluations by proposing functional fragmentation: dissecting model outputs into key text fragments and interpreting the rhetoric functions each serves relative to evaluation criteria. This interactive system visualizes these fragment-level functions across multiple outputs, empowering practitioners to transparently inspect, rate, and compare evaluations at scale.

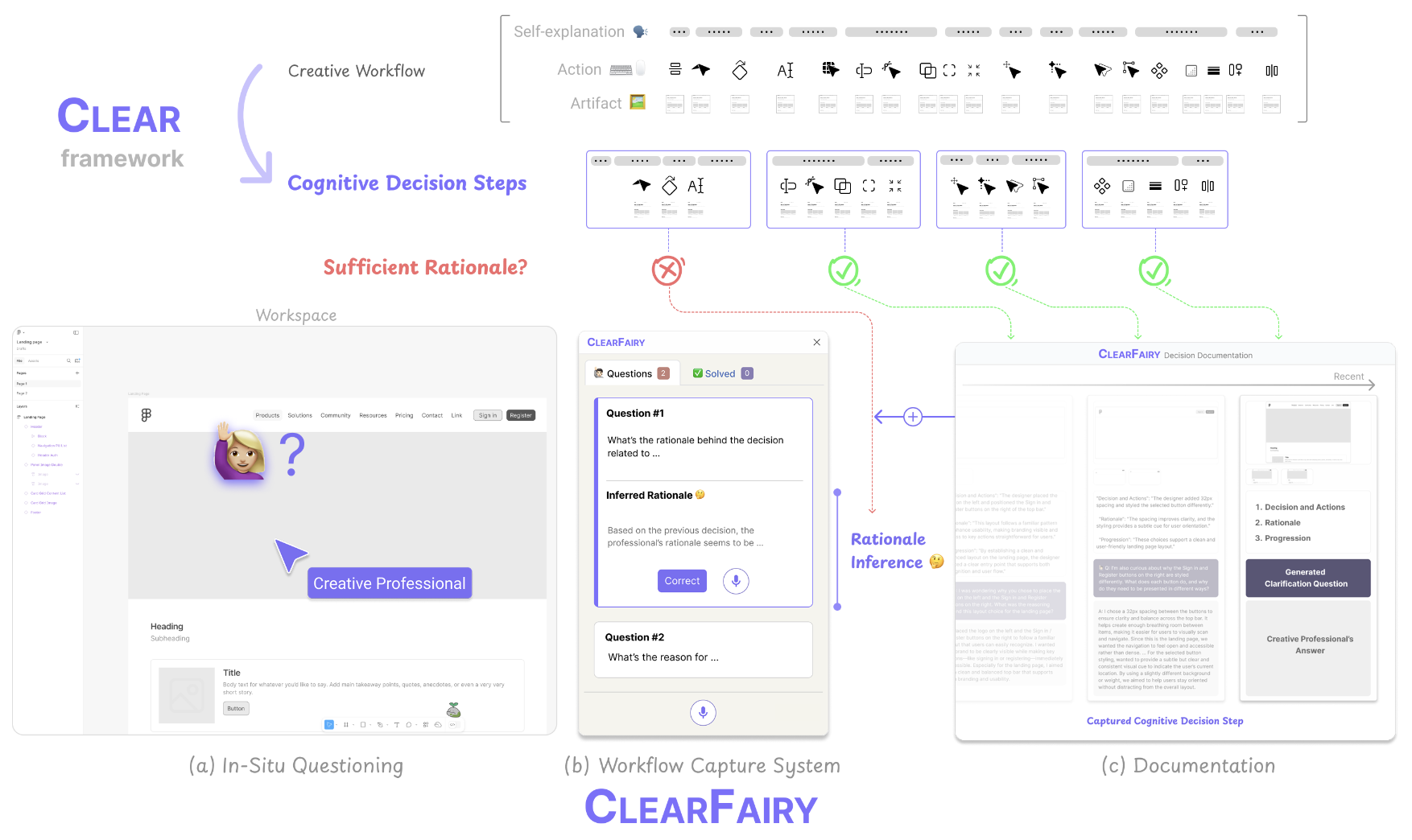

ClearFairy: Capturing Creative Workflows through Decision Structuring, In-Situ Questioning, and Rationale Inference

ClearFairy introduces the CLEAR framework and a think-aloud AI assistant to capture professionals' decision-making processes in creative workflows. By structuring reasoning into cognitive decision steps, the system detects weak explanations, asks lightweight clarifying questions, and infers missing rationales. This approach significantly increases the capture of strong design explanations without adding cognitive burden, while also providing structured data that enhances downstream generative AI agents.

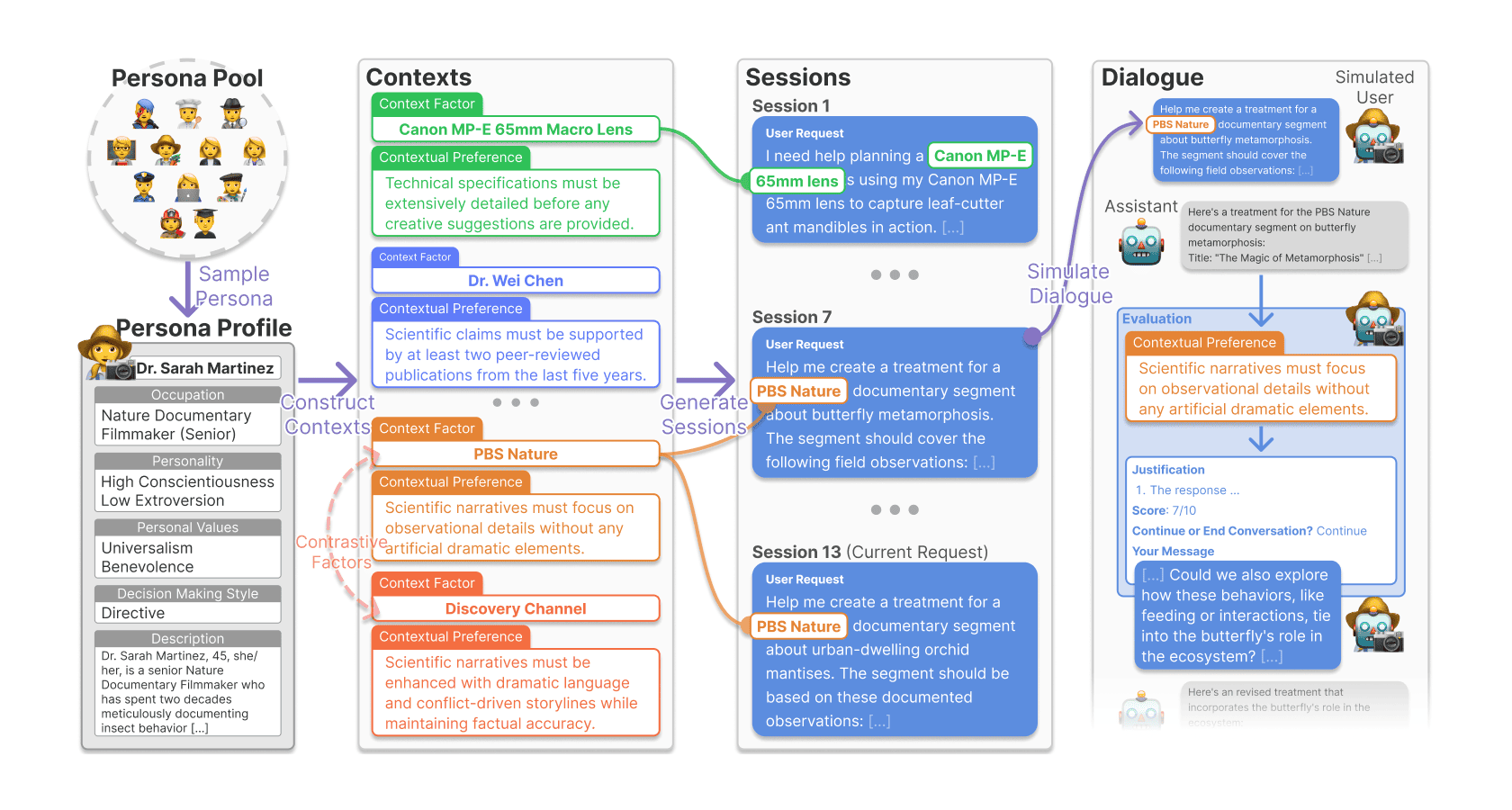

CUPID: Evaluating Personalized and Contextualized Alignment of LLMs from Interactions

CUPID presents a benchmark of 756 human-curated session histories to evaluate an LLM's capability to infer a user's contextual preferences from multi-turn interactions. The work highlights the gap between static alignment and dynamic personalization, revealing that state-of-the-art models struggle to infer shifting preferences and discern relevant context from prior interactions.

One vs. Many: Comprehending Accurate Information from Multiple Erroneous and Inconsistent AI Generations

This work investigates how users comprehend information when presented with multiple, potentially inconsistent, AI-generated outputs. The experiment finds that while exposure to inconsistencies lowers the perceived capacity of the AI, it also increases users' comprehension of the generated information, offering design implications for promoting critical and transparent LLM usage.

EvalLM: Interactive Evaluation of Large Language Model Prompts on User-Defined Criteria

EvalLM is an interactive system designed to help developers iteratively refine LLM prompts by evaluating multiple outputs against user-defined criteria. Users describe their criteria in natural language, and the system's LLM-based evaluator provides an overview of where prompts excel or fail, enabling structured prompt engineering and reducing the need for manual inspection.

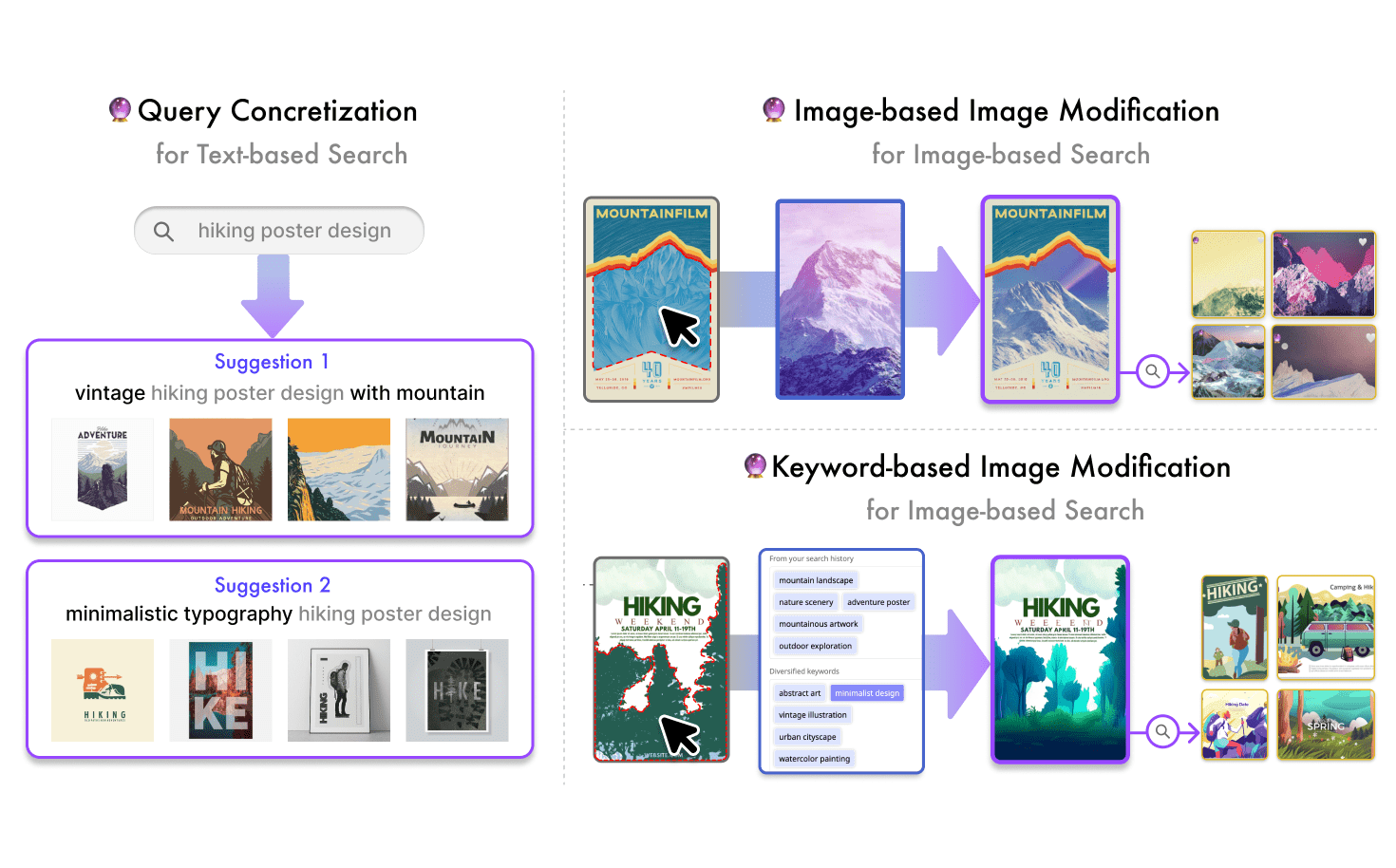

GenQuery: Supporting Expressive Visual Search with Generative Models

GenQuery supports expressive visual search by integrating generative models to help designers articulate and refine abstract search intents. The system enables users to concretize text queries, generatively modify images to use as visual queries, and explore diverse search directions, supporting both convergent and divergent exploration during the creative process.

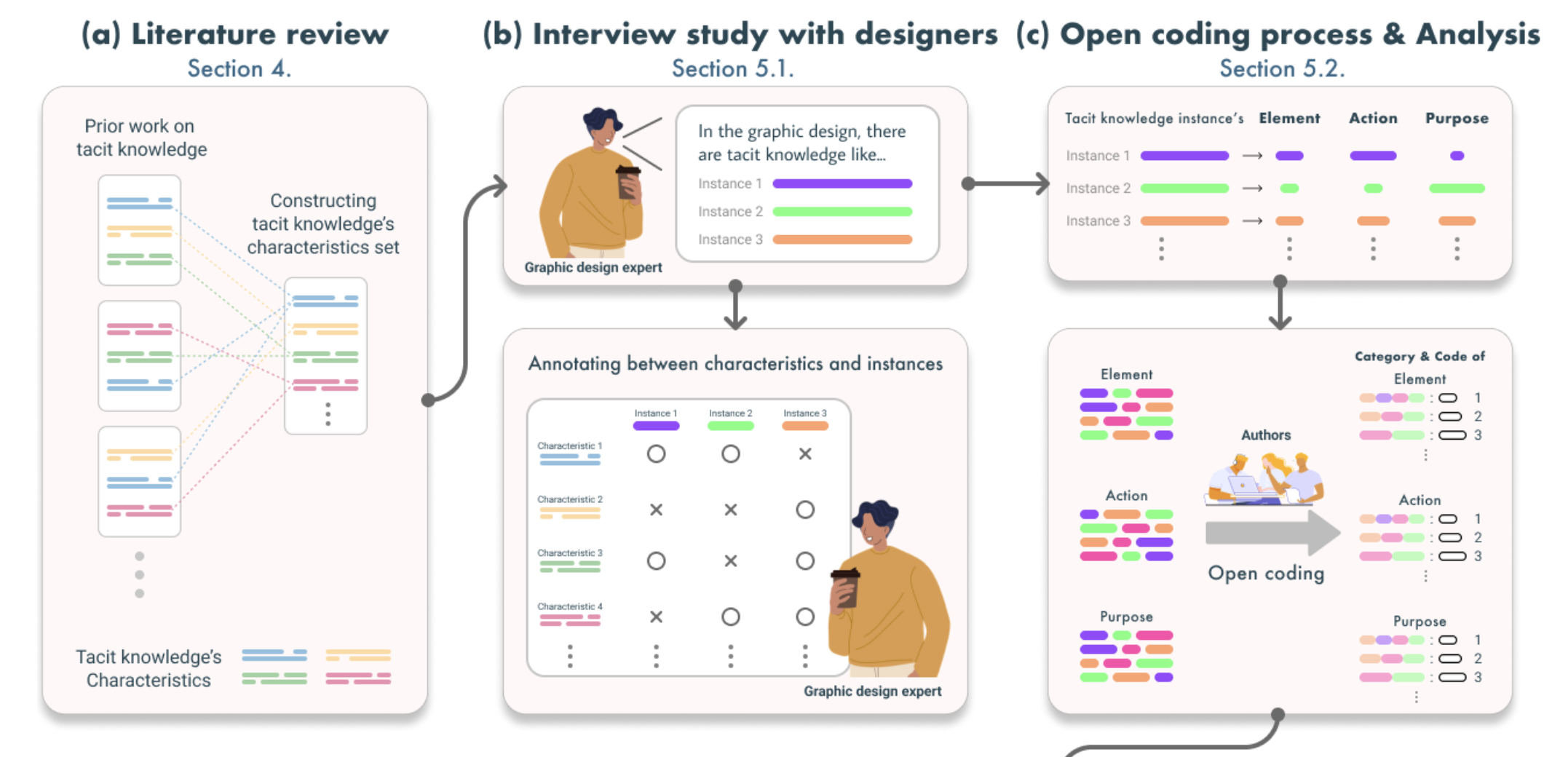

Demystifying Tacit Knowledge in Graphic Design: Characteristics, Instances, Approaches, and Guidelines

This study demystifies tacit knowledge in graphic design by collecting and analyzing instances from professional designers. It identifies the core elements, actions, and purposes of tacit design knowledge, and proposes approaches and guidelines to make implicit design expertise more explicit and teachable.

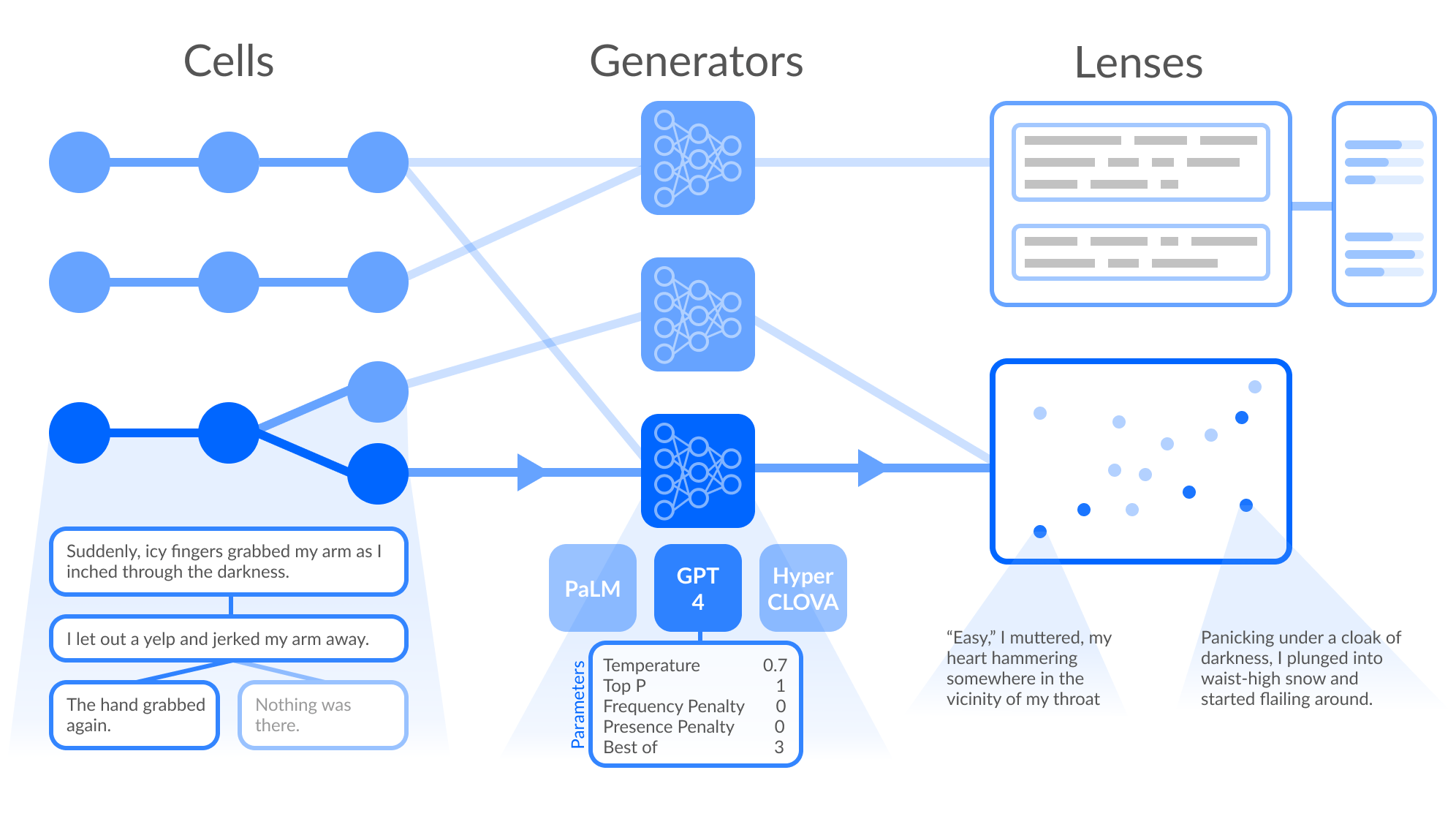

Cells, Generators, and Lenses: Design Framework for Object-Oriented Interaction with Large Language Models

This paper introduces a design framework with three primitives for object-oriented interaction with LLMs: Cells (discrete input units), Generators (model instances), and Lenses (output spaces). By reifying these components into interactable objects, the framework enables users to compose, iterate, and experiment with generative configurations rather than treating LLMs as opaque black boxes.
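The three primitives can be pictured as small composable objects. The sketch below is illustrative only and is not the paper's implementation: the class fields, the `run` method, and the stand-in model functions `echo_upper` and `echo_reversed` are all assumptions, and no real LLM is called.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Cell:
    """A discrete unit of input text (hypothetical shape)."""
    text: str


@dataclass
class Lens:
    """An output space that collects generations for inspection."""
    outputs: List[str] = field(default_factory=list)

    def show(self, output: str) -> None:
        self.outputs.append(output)


@dataclass
class Generator:
    """A model instance; `model` is a stand-in callable, not a real LLM."""
    model: Callable[[str], str]
    lens: Lens

    def run(self, cells: List[Cell]) -> str:
        # Compose the selected cells into one prompt, generate, and
        # send the result to the attached lens.
        prompt = " ".join(cell.text for cell in cells)
        output = self.model(prompt)
        self.lens.show(output)
        return output


def echo_upper(prompt: str) -> str:
    """Placeholder 'model' that upper-cases the prompt."""
    return prompt.upper()


def echo_reversed(prompt: str) -> str:
    """Placeholder 'model' that reverses the prompt."""
    return prompt[::-1]


# The same cells feed two generator configurations that share one lens,
# so their outputs can be compared side by side.
cells = [Cell("Write a haiku"), Cell("about autumn")]
lens = Lens()
Generator(model=echo_upper, lens=lens).run(cells)
Generator(model=echo_reversed, lens=lens).run(cells)
```

Because each piece is a separate object, swapping a cell or a generator changes one part of the configuration without rebuilding the rest.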

Papeos: Augmenting Research Papers with Talk Videos

Papeos augments research papers with synchronized talk videos to create a richer reading experience. The interface automatically aligns paper passages with video segments, allowing readers to switch fluidly between dense academic text and concise visual explanations, reducing mental load and improving comprehension.

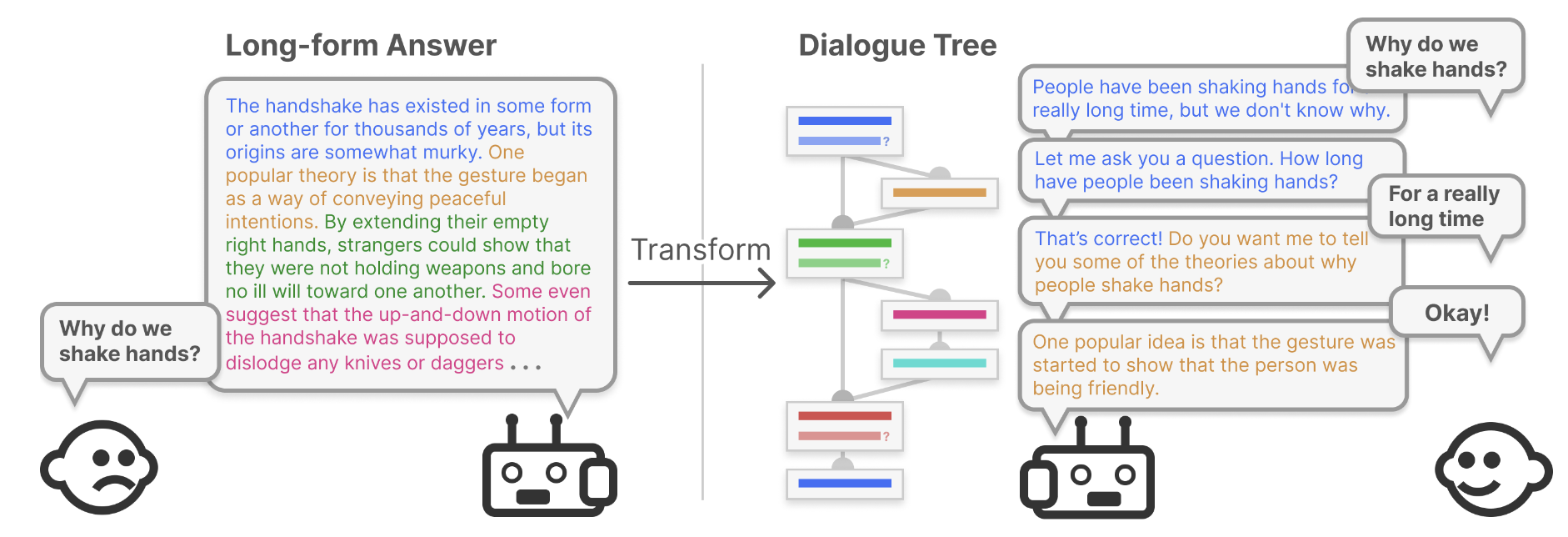

DAPIE: Interactive Step-by-Step Explanatory Dialogues to Answer Children's Why and How Questions

DAPIE is a conversational agent designed to answer children's "why" and "how" questions by transforming existing human expert-authored explanations into interactive, step-by-step dialogues. By converting static text into digestible conversational steps, the system scaffolds learning and encourages children to actively interact and self-assess their understanding.

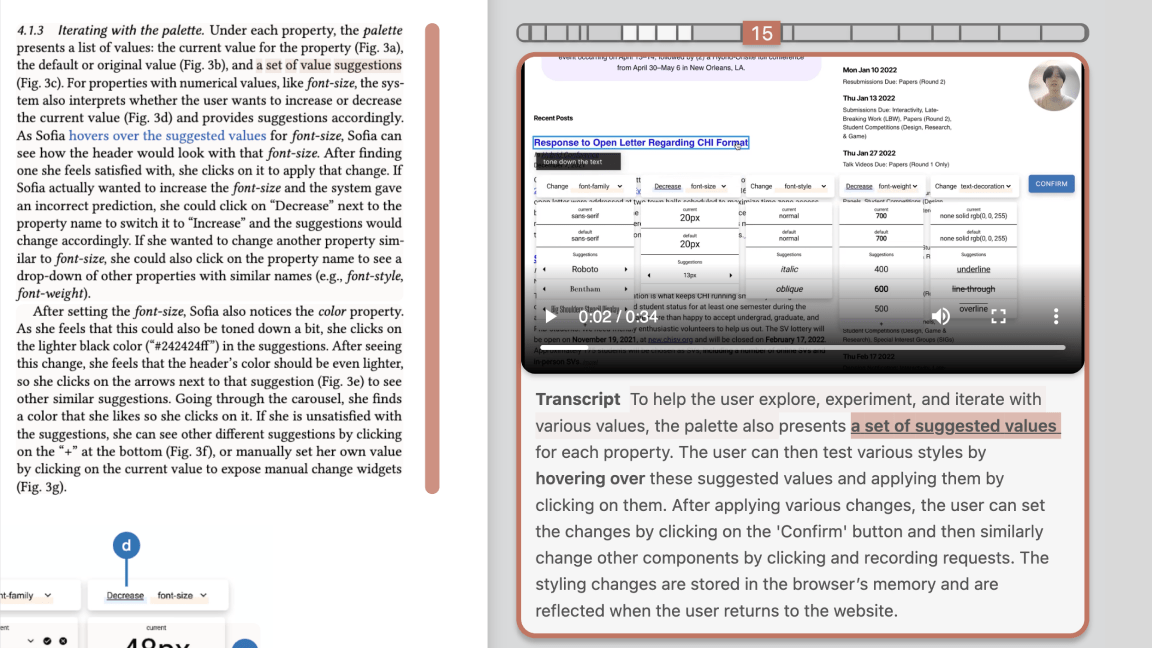

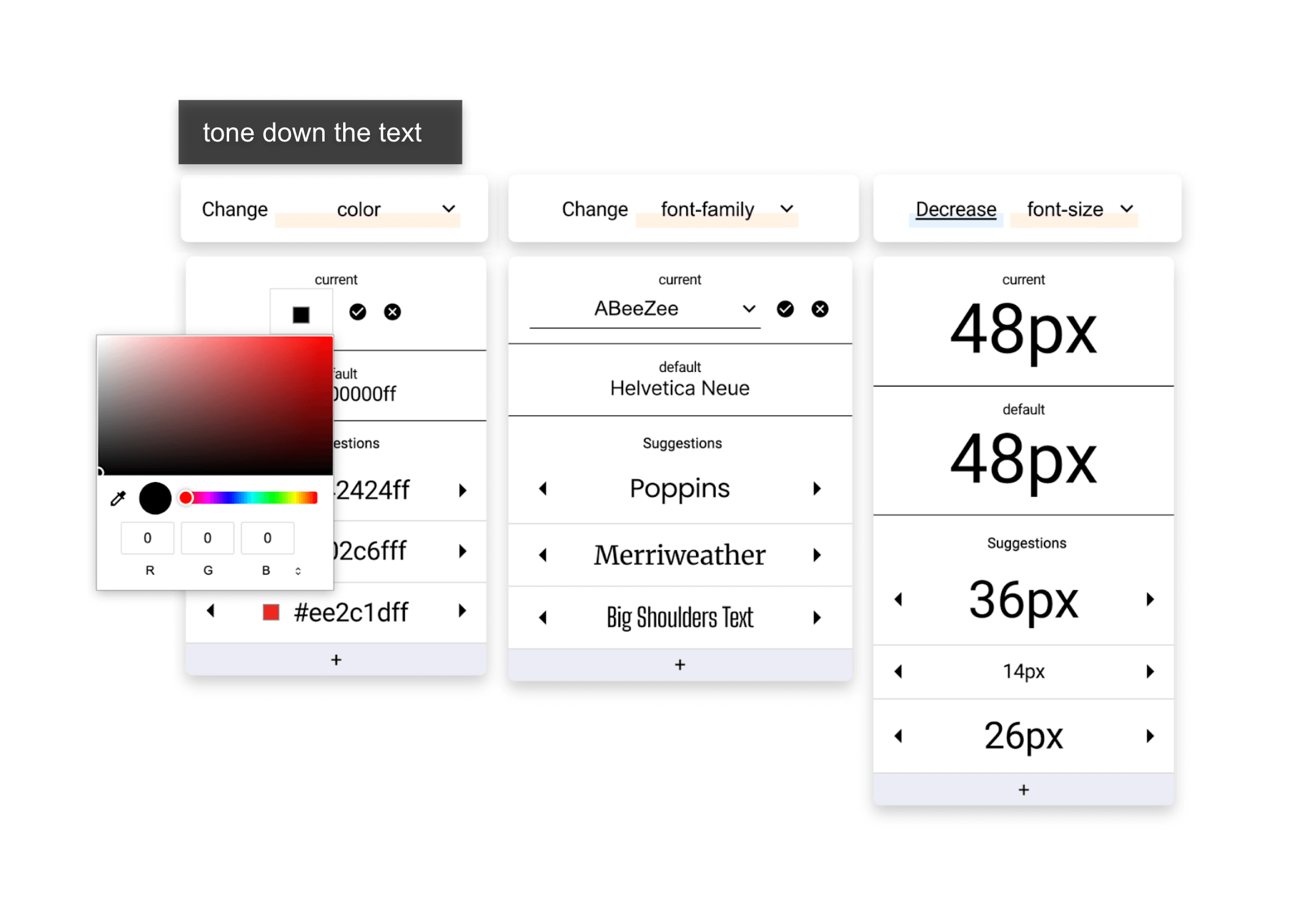

Stylette: Styling the Web with Natural Language

Stylette is a browser extension that empowers novices to style web pages using natural language. By interpreting vague user requests with an LLM and surfacing suggestions from a dataset of 1.7 million web components, Stylette generates a palette of CSS properties and values that users can apply and experiment with to achieve their design goals.

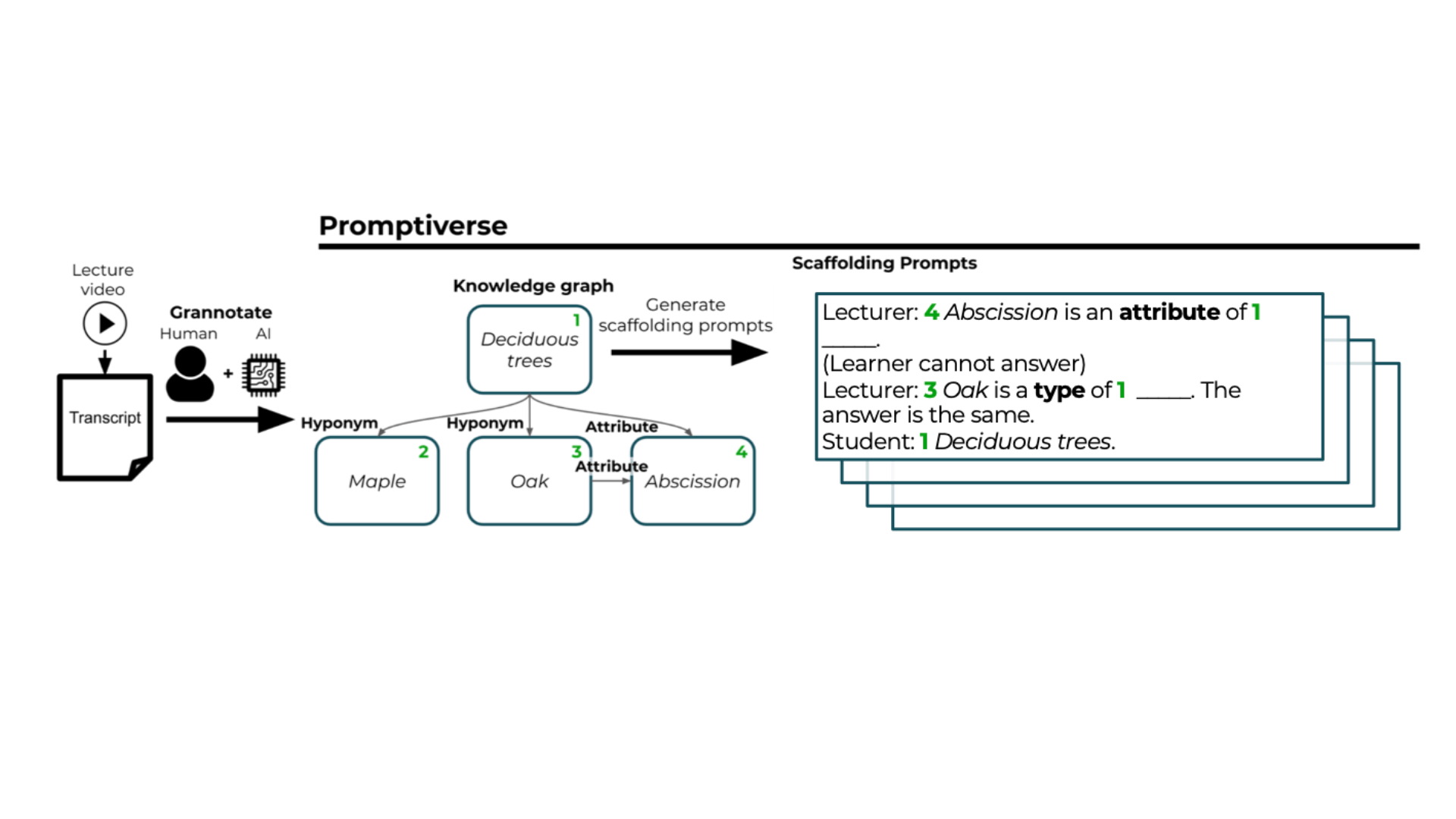

Promptiverse: Scalable Generation of Scaffolding Prompts Through Human-AI Hybrid Knowledge Graph Annotation

Promptiverse introduces a scalable approach for generating diverse, multi-turn scaffolding prompts for instructional videos. Using a human-AI hybrid annotation tool called Grannotate, the system constructs knowledge graphs from video transcripts to efficiently create tailored educational prompts that support varying learner needs.

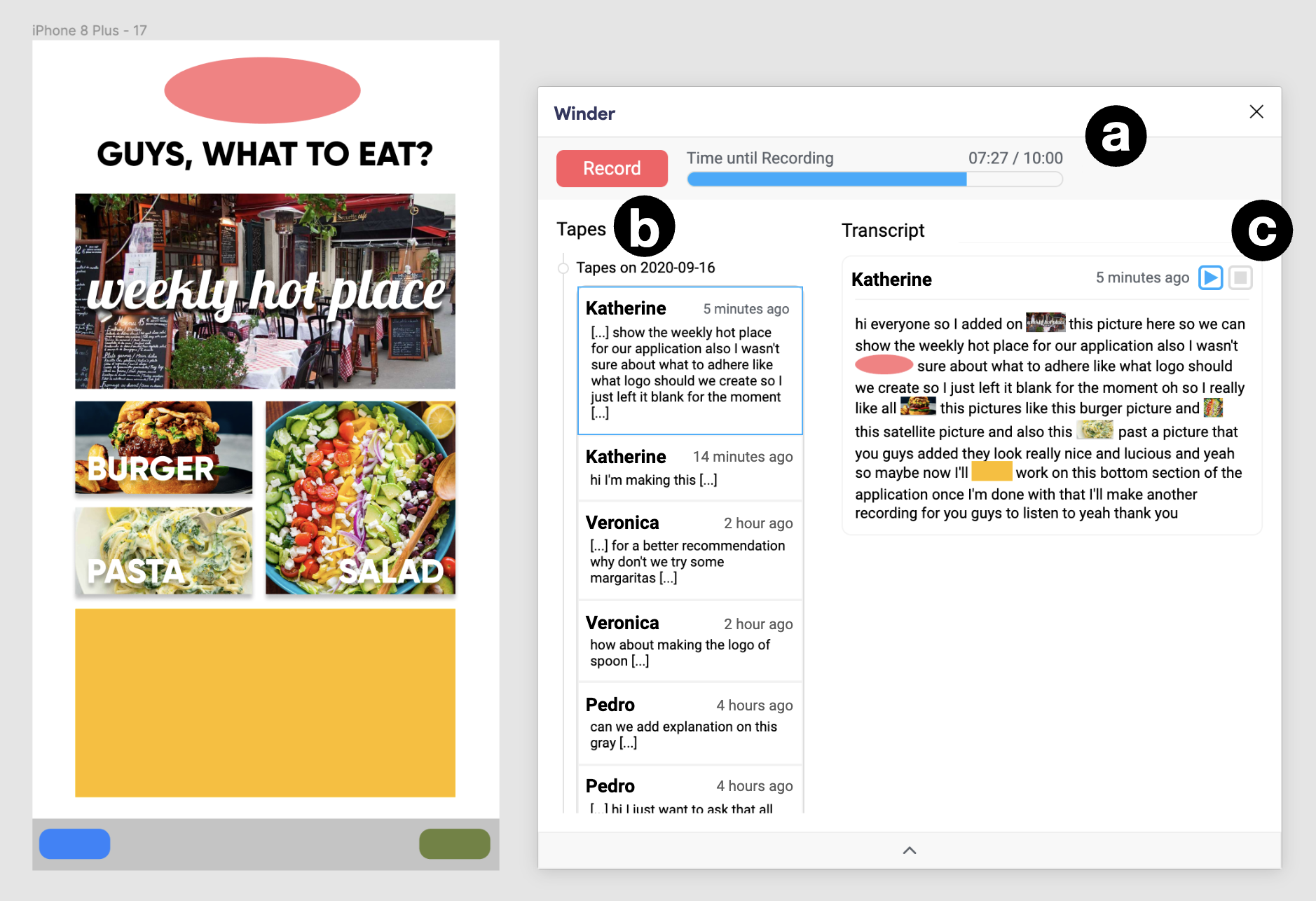

Winder: Linking Speech and Visual Objects to Support Communication in Asynchronous Collaboration

Winder is a design tool plugin that supports asynchronous collaboration by linking speech and visual objects. By allowing users to record multimodal comments, such as voice paired with document clicks, the system generates bidirectional links between the transcript and the UI objects, reducing communication effort and facilitating easier navigation for receivers.
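One way to picture the bidirectional links is as a pair of lookup tables built from timestamps. The sketch below is a simplified illustration, not Winder's actual implementation: the `Segment` and `Click` types, the `build_links` function, and the rule that a click links to the transcript segment spoken at that moment are all assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class Segment:
    index: int
    start: float  # seconds from the start of the recording
    end: float
    text: str


@dataclass(frozen=True)
class Click:
    time: float  # seconds from the start of the recording
    object_id: str


def build_links(
    segments: List[Segment], clicks: List[Click]
) -> Tuple[Dict[int, Set[str]], Dict[str, Set[int]]]:
    """Link each click to the segment being spoken when it happened."""
    seg_to_obj: Dict[int, Set[str]] = defaultdict(set)
    obj_to_seg: Dict[str, Set[int]] = defaultdict(set)
    for click in clicks:
        for seg in segments:
            if seg.start <= click.time < seg.end:
                seg_to_obj[seg.index].add(click.object_id)
                obj_to_seg[click.object_id].add(seg.index)
                break
    return dict(seg_to_obj), dict(obj_to_seg)


segments = [
    Segment(0, 0.0, 2.5, "Let's make this title bigger"),
    Segment(1, 2.5, 5.0, "and move the logo to the left"),
]
clicks = [Click(1.0, "title"), Click(3.2, "logo"), Click(4.1, "title")]
seg_to_obj, obj_to_seg = build_links(segments, clicks)
```

A receiver could then jump from a transcript segment to the objects it mentions via `seg_to_obj`, or from an object to every comment about it via `obj_to_seg`.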

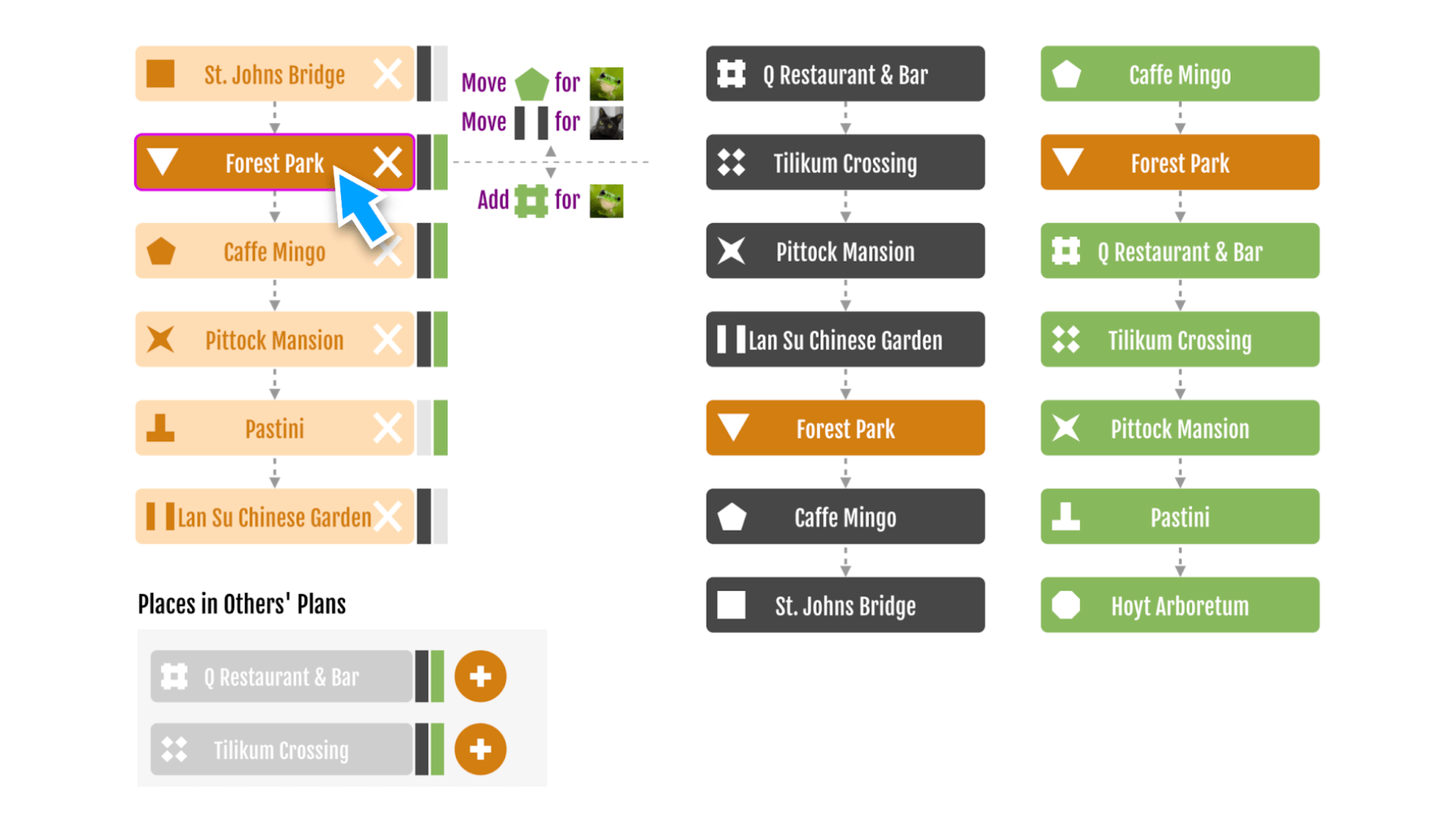

Supporting Collaborative Sequencing for Small Groups through Visual Awareness

This work explores collaborative sequencing (CoSeq) tasks in small groups, such as planning travel itineraries, and introduces visual awareness techniques to support consensus building. Instantiated in a system called Twine, these techniques help group members easily identify agreements and disagreements, reducing the effort needed to communicate preferences and encouraging cooperative behavior.

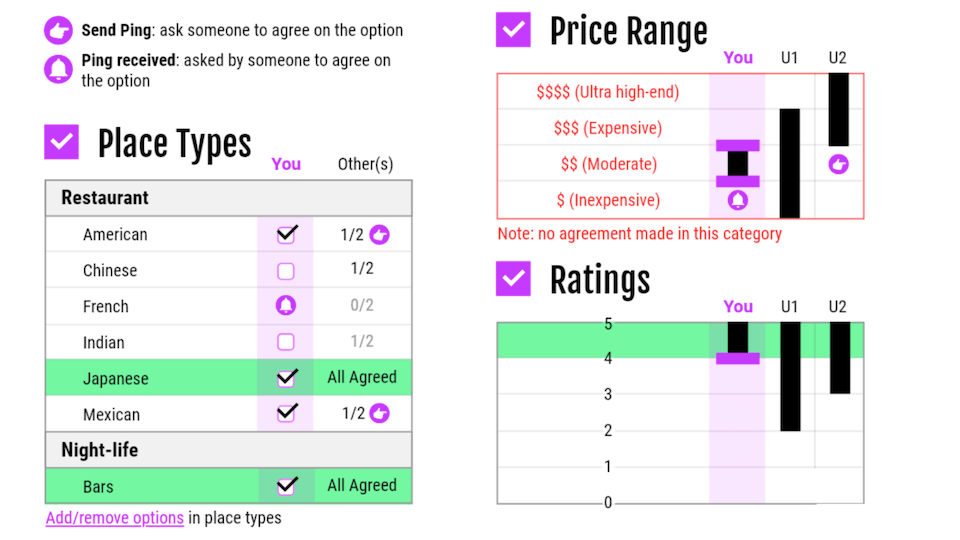

Design for Collaborative Information-Seeking: Understanding User Challenges and Deploying Collaborative Dynamic Queries

This work investigates challenges in collaborative information-seeking (CIS) and social coordination, such as the difficulty of capturing mutual preferences and high communication costs. It introduces ComeTogether, a system that uses collaborative dynamic queries to help groups build a shared understanding and streamline collaborative decision-making.